Essay

No. 042

16 April 2026

14 min read

Strategy

When the map becomes the territory and optimization runs out of road

Read the 1st episode here, 2nd episode, 3rd episode, 4th episode

“Would you tell me, please, which way I ought to go from here?” “That depends a good deal on where you want to get to.” “I don’t much care where.” “Then it doesn’t much matter which way you go.” “…So long as I get somewhere.” “Oh, you’re sure to do that, if only you walk long enough.”

Lewis Carroll, Alice’s Adventures in Wonderland

There is a branch of applied mathematics concerned with a deceptively simple question: how to find the best possible solution in a complex landscape. The difficulty is not movement, but direction. It is easy to improve locally: to follow the steepest path, to make continuous progress according to the signals available. It is much harder to know whether you are climbing the highest hill.

Most optimization systems, left to their own logic, converge toward local optima. They reward what can be measured, reinforce what already works, and reduce variance over time. The system improves, but within a narrowing frame. It becomes increasingly efficient at reaching solutions that are good enough, and increasingly incapable of discovering those that are fundamentally better.
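The local-optimum trap described above can be made concrete with a toy example. What follows is a minimal sketch, not a model of any real market: the `landscape` function, its two hills, the step size, and the starting points are all hypothetical choices made for illustration.

```python
import math


def landscape(x: float) -> float:
    """Hypothetical 1-D landscape: a low hill near x=2 and a higher hill near x=8."""
    return 3.0 * math.exp(-((x - 2.0) ** 2)) + 5.0 * math.exp(-((x - 8.0) ** 2) / 2.0)


def hill_climb(start: float, step: float = 0.1, max_iters: int = 10_000) -> float:
    """Greedy local search: move to a neighbor only if it scores strictly higher."""
    x = start
    for _ in range(max_iters):
        # Listing x first means ties keep the current position, so the loop stops.
        best = max((x, x - step, x + step), key=landscape)
        if best == x:
            break
        x = best
    return x


# A single climber starting near the low hill settles on it and stops improving.
local = hill_climb(start=1.0)

# Several climbers with different starting points (assumed values) cover more ground.
restarts = [hill_climb(start=s) for s in (0.0, 2.5, 5.0, 7.5, 10.0)]
best = max(restarts, key=landscape)

print(f"single start settles near x={local:.1f}, height {landscape(local):.2f}")
print(f"best of restarts is near x={best:.1f}, height {landscape(best):.2f}")
```

The single climber never makes a wrong move by its own measure: every step improves the score. It simply has no way to learn that a higher hill exists, which is the sense in which a system can become efficient and blind at the same time.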

For the past decade, SaaS marketing has operated as such a system. Deterministic playbooks, attribution models, and performance loops promised a form of convergence: follow the process, and results would follow. And they did. Locally.

This article is not about whether optimization worked. It did. It is about what happens when an entire industry converges — efficiently, predictably, and at scale — on the same hill, chosen not by strategy, but by the structure of the system itself.

The Cheshire Cat’s logic is Goodhart’s Law in narrative form. If you have not defined the destination, every direction produces movement, and movement feels like progress. “You’re sure to get somewhere, if only you walk long enough” is convergence itself: a thousand companies walking with increasing efficiency, all arriving somewhere and mistaking arrival for achievement.

We did not just optimize marketing. We redefined it as an optimization problem. That reframing, repeated across an industry over more than a decade, transformed a strategic function into an operational one. The previous articles in this series traced how that happened: how pipeline became a target rather than an indicator, how measurement infrastructure encoded that shift and made it structurally expensive to reverse, how attribution manufactured the quarterly evidence that made the shift feel rational, and how the financial bill accumulated in silence while the dashboards reported progress. At each stage, a proxy replaced the objective it was supposed to measure, and by the time anyone thought to question it, the proxy had outlived the conditions that made it useful.

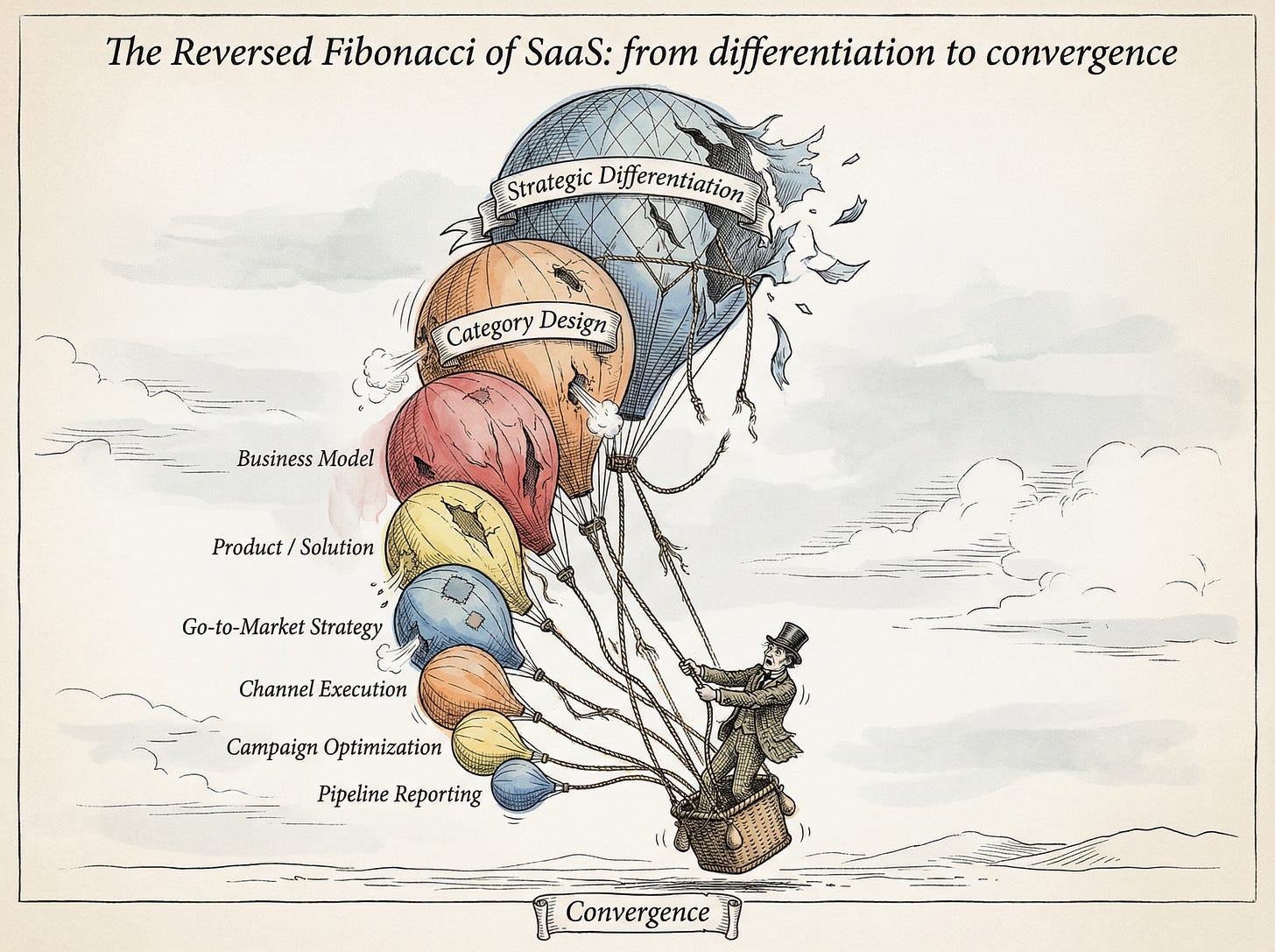

The convergence was never a conspiracy. It was an emergent property of a system in which every participant was rationally pursuing local optimization. Thousands of companies independently adopted the same demand generation playbook: gated content, webinar-to-demo funnels, ABM sequences, standardized MarTech stacks, dashboard-driven decision making. Each believed it was competing. In aggregate, the industry was converging.

Created with Gemini 3 Pro (Nano Banana Pro)

Differentiation did not disappear because it was rejected. It disappeared because it was not required. The system did not reward it, measure it, or protect it. Over time, it became optional, then expensive, then invisible. Companies did not converge by copying each other. They converged by not choosing a direction. Execution improved. Direction stayed undefined.

The symptoms show up in every QBR across horizontal SaaS, though they are rarely traced to a common cause. Acquisition costs rise as companies bid on the same keywords, run the same channels, and target the same accounts with interchangeable messaging. Win rates decline as buyers arrive without conviction, forcing sales to manufacture differentiation in real time, one deal at a time. Sales cycles lengthen as decisions feel riskier precisely because the vendors look interchangeable. Value propositions expand and blur until category boundaries dissolve: analytics tools becoming data platforms, marketing automation becoming all-in-one suites, each product stretching toward every adjacent product. Consolidation follows.

Not all SaaS companies are equally exposed to what follows. The convergence hit hardest where differentiation was thinnest. Mid-tier horizontal SaaS performing generic, repeatable tasks — basic analytics, content tools, marketing automation, project management, workflow automation — proved most vulnerable. When AI can perform those tasks at near-zero cost and acceptable quality, the value proposition collapses unless supported by deeper advantages: proprietary data, ecosystem integration, switching costs, or trust.

More resilient segments occupy a different position. Vertical SaaS remains embedded in workflows, regulations, and domain-specific data. Infrastructure and usage-based platforms see AI increase usage through their systems rather than around them. Platform companies with network effects and proprietary data have moats that execution cannot replicate. The pattern is consistent: AI compresses weak value and reinforces structural advantage.

The system scaled efficiency. It also removed the need to choose direction. In a stable environment, that was sufficient, because destinations could be borrowed: follow the demand waterfall, replicate the playbook from the last successful exit. That condition is ending.

This is not the first transition the industry has faced. Cloud, mobile, and SaaS all rewrote the rules of execution. But it is the first where the system no longer tolerates drift. In previous shifts, companies could remain partially wrong and still recover over time. Now, each step in the wrong direction compounds faster than it can be corrected. The system does not absorb error anymore. It accelerates it.

That recovery depended on something the system no longer provides: the capacity to absorb lag. AI did not create the system: it exposed its limits and accelerated their consequences.

The structural fragility predates generative AI by years. But what happens when near-zero marginal cost execution is applied to a system already optimized for sameness?

Execution drops to machine speed. Content, campaigns, personalization, testing — produced instantly and infinitely. Variation becomes theoretically infinite and practically irrelevant, because every company gains the same capabilities at the same time. Best practices spread instantly and are replicated before advantage can compound. Advantage duration collapses, and what used to create differentiation now creates saturation.

And then something more consequential happens. More activity generates more interference. Signals dilute, attribution weakens, outcomes become harder to trace back to causes. As execution scales, understanding degrades: the system becomes easier to operate and harder to read.

For years, the gap between market change and organizational learning remained tolerable. The two evolved at roughly the same pace, so the gap could be absorbed: companies could afford to be slightly off course without immediate consequence. That tolerance has expired. Technological change now follows an exponential curve, while organizational learning remains largely linear, constrained by incentives, structures, and human processes. At first, the gap is small. Then it widens. Eventually, it becomes structural, and beyond that point it widens faster than it can be closed. And a system that cannot close the gap does the only thing it knows how to do: it compensates for the absence of learning by optimizing what it already knows.
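The shape of that widening gap can be sketched with a few lines of arithmetic. The curves and the 30% rate below are assumptions picked purely for illustration, not measurements: one quantity compounds each period, the other grows by a fixed increment each period.

```python
def capability_gap(t: int, rate: float = 0.3) -> float:
    """Gap at period t between a compounding curve and a linear one (hypothetical units)."""
    technology = (1 + rate) ** t  # compounds every period
    learning = 1 + rate * t       # adds the same fixed increment every period
    return technology - learning


gaps = [capability_gap(t) for t in range(9)]
# How much the gap grows from one period to the next.
widening = [later - earlier for earlier, later in zip(gaps, gaps[1:])]

for t, gap in enumerate(gaps):
    print(f"period {t}: gap = {gap:.2f}")
```

Under these assumptions the two curves are indistinguishable for the first period, and the gap then grows by a larger amount in every period than it did in the one before. The point of the sketch is only the shape: a fixed rate of catching up cannot close a gap that is itself accelerating.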

More subtly, the nature of the system itself begins to shift. It no longer behaves like something that can be decomposed, measured, and improved step by step. The rules that made optimization reliable begin to dissolve, not gradually, but all at once.

Optimization requires stable cause-and-effect. That condition is gone. The map did not just replace the territory. It became the only territory the system could navigate.

The system did not simply become harder. It changed category, and with it the logic of action. What could once be treated as a complicated problem (analyzed, decomposed, optimized) now behaves as a complex one, where cause and effect can only be understood in retrospect. In a complicated system, optimization is the correct response: measure, test, improve, scale. In a complex system, the optimization logic has no stable foundation, and a different approach is needed: explore, interpret, adapt.

The entire machinery of modern marketing — dashboards, attribution models, testing frameworks, quarterly planning cycles — was built on optimization logic. It assumes that actions produce measurable, repeatable outcomes. That assumption no longer holds consistently enough to rely on.

When causality weakens, execution remains possible, but understanding becomes scarce. The spiral defunded that understanding. AI makes it more valuable, not less. When outputs can be generated infinitely, what matters is not production, but the insight behind it: market intelligence, competitive understanding, positioning clarity, the credibility that makes an output worth acting on. In a market where every piece of content can be generated at scale, trust is the one asset that cannot be automated, manufactured, or accelerated. It compounds slowly and is destroyed quickly. The organizations that built it before the convergence hold an advantage that appreciates as everyone else floods the market with fluent content carrying no earned authority.

But here lies a more fundamental constraint. The system now requires learning. The people inside it were formed for execution. Hiring favored attribution fluency. Promotions rewarded dashboard literacy. Careers were built on navigating stable environments and producing predictable outputs. The people who need to lead the reconstruction were, in significant part, formed by the system the reconstruction is meant to replace, and that system is still actively reinforcing itself. Performance reviews still measure pipeline. Hiring still screens for playbook fluency. Promotions still reward predictability.

Organizations sense dysfunction and respond predictably. They look for someone to make the old system work again: faster, more efficiently, now with AI. More campaigns. More automation. More measurement.

The spiral tightens.

The result is not an immediate collapse, but a transitional state without resolution. The old system continues to operate — metrics, pipelines, playbooks, forecasts — while the conditions that made it effective no longer hold. A new approach is required, but not yet fully formed, institutionalized, or widely accepted.

Organizations are caught between two logics. One still governs performance: predictable pipeline, measurable ROI, execution against known benchmarks. The other reflects reality: unstable causality, shifting signals, non-repeatable outcomes. Neither fully dominates.

In that space, the default response is not reinvention, but intensification: more optimization, more execution, more of what used to work, applied with greater sophistication. This is not denial. It is structural inertia. The system does not know how to do anything else.

What appears as progress often follows a different logic.

What looked like compounding growth was the opposite. Each cycle of optimization reduced variance, narrowed exploration, and removed alternative paths. Fewer alternatives are tested, the range of possible moves contracts, and the system becomes more predictable but less adaptive. Each step does not open the future. It closes it. Every iteration makes the next one less creative, less differentiated, less capable of responding when the environment shifts. Optionality declines. Diversity disappears. The organization becomes highly efficient at navigating a world that is getting smaller, until it no longer knows how to operate outside it.

This is not compounding. It is a reversed Fibonacci sequence: a dynamic in which each step forward reduces what can come next. SaaS did not just optimize for growth. It optimized in a way that systematically reduced its ability to grow differently.

When the environment changes, the system has fewer ways to respond: not because it failed to act, but because it acted too consistently in the same direction. If the need to change is this visible, the question becomes: why does the correction not happen? The answer is not awareness. It is permission.

Because the constraint is not only strategic. It is financial. Most SaaS companies are funded and evaluated on their ability to produce predictable returns: pipeline, growth rates, payback periods, efficiency metrics. These indicators assume a stable world. They require outcomes to be measurable, attributable, and repeatable. They are designed for a world in which cause and effect can be reliably linked.

Experimentation does not fit easily within this model. It produces uncertain outcomes, delayed returns, and ambiguous signals. It looks inefficient, even when it is necessary. From a financial perspective, learning appears as volatility.

The tension is structural. The system requires exploration, but rewards predictability. Capital does not simply prefer optimization. It enforces it. Venture capital fund cycles, PE return expectations, and quarterly earnings pressure all define value through short-loop, attributable outcomes. When the board evaluates marketing on pipeline contribution, experimentation is not just risky: it is irrational within the system’s own definition of performance. Companies do not fail to experiment because they do not understand experimentation. They fail because the way their investors and boards define success makes experimentation look like waste.

The same metrics used to evaluate performance — pipeline, conversion rates, ROI — reinforce the behaviors that created the problem. Optimization is not just a habit. It is the path of least resistance within the system’s definition of value.

This creates a closed loop. Capital expects predictability. Predictability requires optimization. Optimization reduces learning. Reduced learning increases fragility. And fragility, when it appears, is interpreted as a failure of execution rather than a limitation of the system itself.

The system cannot easily correct itself, because the conditions that created the problem are the same ones used to judge performance.

When volatility is structural, the response cannot be to eliminate it. It must be to build for it.

There is a Chinese proverb: “When the winds of change blow, some people build walls and others build windmills.” Some organizations build walls: systems optimized for efficiency, predictability, and resistance to change. Others build windmills: systems designed to learn from volatility rather than shelter from it.

Windmills are not philosophical. They are structural. They detect signals, extract learning, and reallocate resources continuously. They are not optimized for output, but for understanding.

Here AI is reframed: not as an output multiplier, but as an intelligence tool. Execution becomes abundant, interpretation becomes the constraint, and the bottleneck shifts from production to meaning.

AI handles the fast work at a speed no team can match: content, data processing, campaign mechanics, reporting. That frees human time for the slow work that determines outcomes: market intelligence, competitive understanding, positioning clarity, the question behind the question. It can also extend that work: not just by accelerating execution, but by challenging assumptions, generating alternative frames, asking better questions across teams and models, and expanding the space of possible answers. Whether organizations use that capacity for genuine strategic understanding or reinvest it in more campaigns is the single most consequential choice of the AI era. If the objective has not changed, the freed time gets recaptured by the convergence: more of the wrong thing at higher speed. Fast execution, slow thinking. The order matters.

Playing to learn is not a motivational posture. It is, structurally, the reintroduction of variance into a system that has been optimized to eliminate it.

That is a capital allocation decision, not a cultural one. It determines what the organization is allowed to try, and what it is forced to repeat. It means funding exploration whose outcomes are uncertain, and protecting it from the metrics designed to kill it. Most organizations do not fail to learn because they lack intent. They fail because their capital structure does not permit it.
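The trade-off between exploitation and protected exploration can be sketched as a toy two-option problem. Everything here is a hypothetical setup chosen for illustration: the two "playbooks", their payoffs, the moment the environment shifts (step 50), and the fixed exploration schedule are assumptions, not a description of any real market.

```python
from typing import Optional


def payoff(arm: str, step: int) -> float:
    """Hypothetical market: playbook 'A' pays steadily; 'B' becomes better from step 50."""
    if arm == "A":
        return 1.0
    return 0.5 if step < 50 else 2.0


def run(explore_every: Optional[int], steps: int = 200) -> float:
    """Play the option with the best last-observed payoff.

    If explore_every is set, every explore_every-th step is spent re-testing
    the option that currently looks worse, regardless of its last result.
    """
    # One initial trial of each option, taken before the shift.
    last_seen = {"A": payoff("A", 0), "B": payoff("B", 0)}
    total = 0.0
    for step in range(1, steps + 1):
        favorite = max(last_seen, key=last_seen.get)
        if explore_every and step % explore_every == 0:
            arm = "B" if favorite == "A" else "A"  # protected exploration budget
        else:
            arm = favorite
        reward = payoff(arm, step)
        last_seen[arm] = reward
        total += reward
    return total


pure_exploitation = run(explore_every=None)
with_exploration = run(explore_every=10)

print(f"pure exploitation: {pure_exploitation:.1f}")
print(f"with exploration:  {with_exploration:.1f}")
```

In this sketch the exploring strategy earns less than the exploiting one for the first 49 steps, because each re-test of the weaker option costs payoff. Judged over that window alone, exploration looks like waste. It pays off only because the environment changes and the exploiting strategy has no mechanism for noticing.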

This implies a shift in orientation. From pipeline optimization to signal discovery. From execution scale to learning velocity. From channel performance to advantage construction: brand, distribution, data, trust. From fixed planning to continuous adaptation.

These are not best practices. They are consequences of operating in a different type of system. Best practices assume stable cause-and-effect. When that assumption dissolves, what worked before spreads faster and stops working faster. The organizations that treat these shifts as a new playbook to adopt will discover that the playbook is already obsolete by the time it is implemented.

There is an asymmetry here worth noting. Companies that move early toward learning-oriented systems gain an advantage: not the advantage of being first, but the advantage of learning faster. They accumulate pattern recognition, signal literacy, and adaptive capacity while others are still optimizing the old system. But the advantage comes with real costs: evangelization, institutional resistance, and the career risk of advocating for uncertainty in organizations that reward certainty. The advantage is compounding learning while others compound convergence.

The separation emerging in SaaS is not between companies that use AI and those that do not. It is between systems optimized for execution and systems designed for learning.

Execution-optimized systems produce convergence, diminishing returns, and increasing fragility. Learning-optimized systems produce differentiation, adaptation, and resilience.

Most companies will remain in the former. Not because they lack awareness, but because shifting requires tolerating uncertainty that their financial structure, their governance, and their institutional incentives do not allow. The ability to learn is not a function of intelligence. It is a function of what the organization is permitted to not know for a period of time.

A smaller number will adapt. Not by executing better, but by understanding faster. Not by scaling what worked, but by discovering what matters now. This is not a marketing change. It is a company-wide reckoning with how the organization learns. The convergence this series diagnosed at the function level has become a market-level dynamic that requires a system-level response.

Marketing did not become broken. It became the first place you could see that everything else was.

The metaphor is older than the industry. In coal mines, canaries were carried underground to detect invisible danger. They were more sensitive to toxic gases than the miners themselves. When the canary stopped singing, it was not the problem. It was the signal. Marketing’s collapse into execution was not an isolated failure. It was an early warning.

The organizations that separate will not be those with the most sophisticated execution. They will be those that learn fastest, adapt continuously, and build the capacity to understand what matters now.

“You’re sure to get somewhere, if only you walk long enough.” The question is not whether you will arrive. It is whether you are willing to trade predictable progress for the ability to decide where progress should lead.

This is the final article in a series on how SaaS marketing lost its strategic foundations. The complete series is available on this Substack. If this framework has changed how you see the system you operate in, subscribe and share it with the people who need to see the pattern.
